Background

Sometimes you read a book that changes you. These experiences are few and far between, especially in the realm of non-fiction. Having read many books in my own field, in biology, in complex systems, and in philosophy, I will say few have moved me as much as this one. I can think of a couple, perhaps selections of David Deutsch’s Beginning of Infinity are up there, and as a package, Edelman’s Neural Darwinism nearly approached this one’s theoretical satisfaction, but neither came close to the immense gratification of this book.

Incomplete Nature, a tome at 670 pages, was published twelve years ago. Its writer, Professor Terrence Deacon at the University of California, Berkeley, is a cognitive scientist and biological anthropologist who has spent his career specializing in brain and language evolution, originally writing The Symbolic Species (1997), before later moving his career to complex dynamical systems inspired approaches for understanding the origins of life, mind, and meaning. Funnily enough, it is worth noting that seven years ago, when I was still a biological anthropologist and sending out applications for my first PhD program, I actually reached out to and had a conversation with Deacon about going to work with him. This was a period of time when I was interested in language evolution, and while I did not go to study with him (I went to and later dropped out of a different program studying different things), it seems a lot of my research interests have doubled back to the same place they started when I sent that email some seven years ago.

This book is a difficult book. I first tried reading it as an undergraduate student nine years ago, as a PhD student in anthropology again six years ago, and then this year as a cognitive scientist. Each time I attempted to wrestle with the book, I stopped short. During my first read, I wondered if I was too stupid to get it. During the second, I wondered if the author was intentionally obfuscating a thin theory. You will ask yourself what Immanuel Kant has to do with the origins of life. You will ask yourself what wind running across wires and water running down the drain has to do with the creation of the number zero. Passages like, “Thus the transition from a simple thermodynamic regime to a morphodynamic regime is marked by the emergence of orthograde tendencies toward highly regularized global constraints that run counter to the constraint-dissipation orthograde tendencies of the underlying thermodynamic processes”… will be taxing on working memory. You will be frustrated, but it will be worth it. Deacon is seemingly aware of the issues, at one point even writing, “If you have read to this point, you have probably found some parts of the text quite difficult to follow. Perhaps you have even struggled without success to make sense of some claim or unclear description….the more difficult the task of creation or interpretation or the more stimulating or frustrating, the more thermodynamic work tends to be involved.”

During this most recent read, I realized this is the difficulty of explaining dynamical systems verbally. Deacon wrote the book in an attempt to appeal to the general public, and in doing so does not include any equations. Many of the difficult to understand, or perhaps, difficult to retrieve, concepts are shorthand for patterns in dynamical systems: orthograde and contragrade tendencies; homeo-, morpho-, and teleodynamic systems; teleomatic, teleological, teleonomic ententionality and so on and so forth. Several times, the group of us from my department who read this book together were begging for a phase portrait or an equation instead of a verbal description. If these concepts are new to you, you will benefit from making a cheatsheet. If dynamical systems are more familiar to you, you will benefit from having a whiteboard to work some of these things out. But as I said, if you can stick around it will be worth it.

What is the book about? I will start first with what it isn’t so that those of you who are expecting this won’t go looking for it. It is not a book about how to locate consciousness in the brain. It is not remotely panpsychist. It doesn’t review the literature on consciousness, talking about IIT, higher-order thought, or the minimization of free energy. It isn’t a book about linear steps and doesn’t waste its time proffering heretofore unknown mechanisms telling us what the mind is and what the origins of life were.

The book starts with what is known. It does not propose there’s anything else out there to be found or located to explain what we see. It is a description of what we can do with material processes which are already known and the ways we can interpret these processes to get into things which are heretofore unexplained: life in the universe, consciousness in mind, and meaning in the world. While these three phenomena seem completely disparate, they are unified in Deacon’s framework as ententional phenomena: phenomena which are necessarily about, or perhaps, for the sake of something. Deacon’s book is therefore an accounting of how the known material properties of the world can be built up into the things which are apparently immaterial (hence the book’s subtitle, “How Mind Emerged from Matter”). It is an answer to the unanswered challenge that materialism has presented our world: if everything is material, why do we not have an account for how these seemingly immaterial properties of the world emerge?

This is the account Deacon provides.

There is a lot in this book of immense value. While you can skip chapter to chapter, it takes an explanatory arc where previous chapters build on one another and so, for better or worse, needs to be read linearly, as difficult as that is. I will try to summarize the book, but speaking specifically to the things which I found valuable. For me, there were a few: Deacon’s focus on a process ontology for describing non-decomposable phenomena, on information as a local process and work as a general description of entropy in both Shannon and Boltzmann terms, on the origins of life and how 3/4ths of the emergent properties of living systems may be more common in the universe than we suspect (telling us life may be more common than we realize), on why AI is non-sentient and almost entirely irrelevant to all of Marr’s levels except implementation, on the allure of neuroimaging, on his description of consciousness building off of and perhaps even completing Edelman’s, and on the relevance of this theory to concepts like meaning, transcendence, and philosophy.

I will therefore explain the book, as well as I can, in terms of the concepts which were important to me. Many of the concepts here have been described by others - in particular, I am aware of the work of Stuart Kauffman and autocatalytic systems, Varela and autopoiesis, and the controversy between Deacon and Juarrero (whose work I have also read and enjoyed). If I don’t reference these things, it is because I am building off of what was said in the book, not outside it. Similarly, if there are references to things like panpsychism, assembly theory, drifting representation, and Markov blankets and the FEP, they are mostly mine. What is incredible to me about this book is that it was written in 2011, and it has anticipated a lot. I will return to this point at the end in my post-script.

The Problem

The primary problem of this book is dualism. Ever since Descartes, philosophers of mind have struggled with how something like consciousness can emerge from material processes or how material processes create things like qualia, or subjective experiences. In recent years, a number of philosophers, such as David Chalmers, have retreated on the matter to admittedly become panpsychist dualists; others, like Dennett and Richard Rorty, have simply argued such properties are an illusion; and as our explanations from neuroscience have failed to get us any closer to the answer, we’ve seemingly been wallowing in the muck searching for answers (I know, IIT people will disagree and that’s fine).

In his journey for an explanation, Deacon attempts to take the problem head-on, attempting to show us how dualist experiences can emerge. His starting point for this is the homunculus problem. Described by philosopher Dan Dennett as the Cartesian theater, the homunculus problem holds that when we cast away Cartesian dualism, where subjective experience is something created by a soul, which itself is separate from the describable material process of the world, what we are left with is a homunculus in the mind. This homunculus equates to an observer, like a little man inside the brain or a Remy from Ratatouille, who monitors external experience as if it were a theater, making decisions for the machine it sits inside while somehow being independent from those material processes.

The problem with such ideas is they consist of “turtles all the way down”: if this homunculus is a decision-maker for the machine, what does this little homunculus consist of? Does this homunculus have a homunculus of his own? Are there homunculi within each homunculus in an infinite recursion of agency going all the way into the world? It seems indefensible, but some panpsychists might agree with such a view, seeing the world as constructed of particular, small consciousnesses which come together to produce larger consciousnesses in the world. Others, such as Dennett, simply say the way out of this is to suggest that consciousness is an illusion: that Descartes was completely wrong to suggest we have independent self-knowledge, and that instead such coherency is something of an emergent kluge that has seemingly deceived us (to be fair, Descartes anticipated and already answered this argument, but no need to get into that).

Deacon forges a different path which rejects illusionism a la Dennett and magic stuff a la Descartes, proposing instead there is perhaps a way in which consciousness, or a homunculus, could be constructed. We start here with the concepts of zero, absence, constraint, and a process ontology which forces us to reject things as kinds in themselves and to view the world instead as consisting of generative and dissipative forces.

Towards a Process Ontology

If we are going to argue about how mind emerges from matter, we have to discuss emergence. Deacon reviews concepts of emergence dating back to the 19th century emergentists and the sort of later conceptions of emergence we see in the contemporary field of complex systems.

Arguing at length, he comes to the conclusion that the way many in the public, and unfortunately, even professional philosophers have come to think of emergence is from an object-ontological view of the world. In this view, the world is constructed of things. Things can be put together in the same way. For John Stuart Mill, sometimes things could be put together in ways where their behavior was predictable from the outset. These were called homopathic effects, such as how the net force in traditional Newtonian mechanics is simply the vector sum of the forces which constitute it. On the other hand, sometimes things could be put together in ways where their behavior was not predictable from the outset. Mill called these heteropathic effects. An example here is table salt, where two noxious chemicals, sodium and chlorine, are combined to produce a novel material that is completely harmless and different from its constitutive parts. This further gets into Deacon’s distinction between systems which are reducible, or can be broken down into constituent parts, and systems which are decomposable, which can be broken down into parts and where those parts on their own represent the role they play in the rest of the system.

The problem with this view is one which has been sweated, and continues to be sweated, over by complex systems since the concept of emergence emerged. Why is biology not reducible to chemistry? Why is chemistry not reducible to physics? In fact, why is everything not reducible to physics? Is there truly a “strong” version of emergence in nature, or is it the case that most forms of emergence we see in the real world are simply us disregarding issues of supervenience as a matter of convenience? In other words, do I simply describe systems like evolution on the level of the gene because it is convenient to do so?

This critique of emergence, that everything more or less on an object level can boil down to the behavior of its constituent parts and that therefore new “things” do not emerge, is the reason why materialists resort to describing consciousness as something of an illusion. It can’t be described in any other way than the activation of neurons and it is difficult to argue that neurons have some level of consciousness to them. The flip-side of this coin is that there has been an immense proliferation of panpsychist views of consciousness: why shouldn’t neurons have some level of consciousness? Why shouldn’t the parts of those neurons similarly reflect this ur-component, as well?

This all makes the whole issue of the emergence of mind from matter intractable. If the critique is right that emergence is not real except from an observer’s perspective, then it either has to be the case that mind is not real or that it is a component shared by all things.

Drawing on the work of Mark Bickhard, Deacon sidesteps the issue by rejecting object-level ontology wholesale and resorting to a process ontology to describe these processes. Instead of thinking of emergent phenomena as being comprised of things doing things, they should instead be described as “doing” happening to and constructing things. This system of philosophy in the 20th century, which grew up with the work of Alfred North Whitehead, has since come to describe a particular concentration of complex systems identified with Nobel Prize-winning chemist Ilya Prigogine and his work on what has come to be called dissipative structures. For Deacon, the value-added here is not that it necessarily eliminates the issue of emergence from systems entirely, but that it instead provides a temporal element to systems, wherein processes beget processes, rather than things begetting things. You might call this causation. To reframe the age-old example in complex systems of rocks rolling down a hill: what matters in this case is not that a rock carrying a set of atoms is rolling down a hill and that therefore the rock is supervenient on its comprising atoms (causing them, in some top-down way, to roll down the hill); what matters instead is that a rock was pushed and this generated a bias for going down a hill. To measure that rock rolling down the hill, you do not need to account for the thing which pushed it, except to understand causation, but that causation does not constitute the new process - it is not being pushed ad infinitum such that being pushed is a part of rolling down a hill.

For processes, we therefore don’t need to describe parts-whole compositions at all. We sidestep strong and weak emergence and focus on causality. Deacon argues that while we can apply object-level descriptions to many human-constructed phenomena in the real world (for example, it is easy to both reduce and decompose the parts of a clock to understand what each part does), ententional properties lack the decomposability that other systems have. This is because these ententional phenomena are processes and processes are not decomposable to simpler processes, except in causality. This is critical, because if it is the case, it means that theories of consciousness cannot rely upon the construction of new things themselves, but must instead rely on dynamic and continuously shifting processes which move from phase state to phase state. The temporality of process causation then means we won’t simply find a “thing” called consciousness, a “thing” called life, or a “thing” called meaning, but instead a causal-temporal process which exhibits causation on other processes.

In part, this may elucidate why the search for a thing constituting consciousness may have been a dead end all along. As Deacon notes with recent advances in neuroimaging, “Although these are some of the most exciting new techniques available, and have provided an unparalleled window into brain function, they also come with an intuitive bias: they translate the correlates of cognitive processes into stationary patterns.”

Dynamical Systems and the Emergence of Ententional Phenomena

Process ontology is the first buy-in required to extract meaning from Deacon’s thesis. Given a process ontology, we still need to explain why there are “things” in the world. Why does apparent stability emerge? Why does a tennis ball look like a tennis ball, and a human being look like a human being? What is going on such that regularities exist?

Consider the human body. It is comprised of tens of trillions of cells. Each of these cells has a bias for things like metabolism, reproduction, and survival. Why should the cells in my body choose to do what they are doing now rather than something else? At any given moment, they could take off on the venture of a lifetime, breaking from the codified norm and attempting these things on their own (and often, they do; we call this cancer). On a deeper level, I am constructed of a variety of chemicals, these chemicals are comprised of atoms, these atoms of other smaller things, and so forth, which could be doing anything. At any given moment, these cells, atoms, and parts of myself do not do what they might otherwise like to do because of constraints. The behavior of EM fields constrains the parts of the atoms which constitute my body, the behavior of other atoms constrains the parts of the other atoms around them, their collective behavior goes on to constrain the behavior of other chemicals and so on and so forth.

At any given moment, while all these things could be doing what they would “like” to do, anything else in the universe such as floating about in the air, they are instead biased towards what they are doing now. They are constrained by particular processes into where they are now. The cells, atoms, and general field which constitutes a human being are therefore akin to a human-shaped hole in the universe: that processes building upon and forcing causality upon one another have pressed the behavior of a large group of forces into one self-reinforcing system. To bring it back to people, it is not the case that you are simply a random collection of parts, of atoms constructing cells, and so on and so forth, but instead a system of forces pressing on itself on multiple levels to self-propagate the furthering of these forces. Imagine yourself like a system of waves generating a system of waves generating a system of waves to eventually generate something more or less a human.

This is profound stuff. One of the students who read this chapter stated he had an out-of-body experience when we walked through it together. It seems anti-human. If I am merely processes, am I also a self? If everything is just biases, is there such a thing as meaning? Deacon’s answers, which develop later and are a large reason why this book is valuable, are yes and yes: you can construct both self and meaning out of this process-based approach and, in fact, it may be the only way to construct these things.

It is well and good to say everything is a process pressing on another process to create other processes. A lot of people, including many in Buddhist philosophy, already think this way. Yet from a process-based approach, it would be a mistake to simply think we should begin to think about a human walking, a car driving, or a water bottle on the table as some gravitational field pressing a human-shaped hole into an otherwise dissipative universe. Instead, we need to think about what constitutes a process in general. We need an accounting of how processes beget biases and how biases eventually construct life, consciousness, and meaning.

This is where Deacon goes straight into dynamical systems. It helps here to have a cheat sheet. If you are going to read the book after reading this blogpost and need one, I suggest you start paying attention to the following definitions and their relationship to work. Three words you will need to get into your head are: homeodynamic, morphodynamic, and teleodynamic. In particular, once you have buy-in on how thermodynamics gives rise to morphodynamics, you will want to know the relationship between morphodynamic and teleodynamic processes. I am going to collapse the description of these processes to their related versions of work here before returning to what Deacon means when he refers to work in general.

Homeodynamic Processes

Homeodynamic processes are where a system is trending towards equilibrium. It is in an excited state and it is tending to get less excited. Things start hot, then they get cold. Heat transfers between two objects until they reach an equilibrium.
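For readers who, like my reading group, want something more concrete than a verbal description, here is a minimal sketch of the hot-and-cold example. It is my own toy model and does not come from the book: the function name, the exchange rate k, and the assumption of two bodies with equal heat capacity are all illustrative choices.

```python
def equilibrate(temp_a, temp_b, k=0.1, steps=100):
    """Toy model (an assumption, not Deacon's): two bodies of equal heat
    capacity exchange heat until their temperatures converge.

    Returns the list of (temp_a, temp_b) pairs, one per time step.
    """
    history = [(temp_a, temp_b)]
    for _ in range(steps):
        # Heat flows from the hotter body to the colder one,
        # in proportion to the temperature difference between them.
        flow = k * (temp_a - temp_b)
        temp_a -= flow
        temp_b += flow
        history.append((temp_a, temp_b))
    return history


if __name__ == "__main__":
    trajectory = equilibrate(100.0, 20.0)
    for step in (0, 5, 20, 100):
        a, b = trajectory[step]
        print(f"step {step:3d}: A = {a:.3f}, B = {b:.3f}")
```

The gradient between the two bodies shrinks at every step and nothing in the system pushes it back up. That one-way drift toward equilibrium is the pattern the rest of this section builds on.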

Homeodynamic processes are therefore our basic thermodynamic processes. Things are trending from an ordered, non-random state into a more disordered, random state. Such processes should be familiar to most who have done middle school physics, but Deacon introduces some vocabulary which is useful to understand.

The first, drawing from Prigogine, is this issue of dissipation. To go from an ordered state to a disordered one is to experience dissipation. Left alone, things generally become disordered over time and do not spontaneously become more ordered. Sometimes, though, things do become more ordered over time.

The movement from order to disorder is what Deacon calls orthograde change. To dissipate, to go with the flow, to spontaneously fall apart, to release constraint. The movement from disorder to order is what Deacon calls contragrade change. To form structure, to go against the flow, to impose work, to increase constraint.

These are the cases in which a homeodynamic process, through its own dissipation, creates what we call a morphodynamic process. The creation of morphodynamic processes through the bias of homeodynamic processes is what we call homeodynamic work.

Morphodynamic Processes

When homeodynamic processes form constraints, it is generally the case that these are dissipative. Take, for example, whirlpools, as in the case of water running down a drain. What you see is the formation of order for the sake of dissipation. In the atmosphere, wind is created to push heat from one area of a gradient to another, creating a bias which ultimately leads to dissipation. Often, when we see order in the world in non-living, natural systems, these are the sort of dissipative structures we are examining. They are ordered in such a way as to lead to their maximal dissipation: both homeodynamic and morphodynamic systems are trending toward equilibrium. Homeodynamic systems do so slowly, but periodically they create a bias (things like wind, whirlpools, vector patterns) so that they may do so quicker. They induce constraints, or metastability, to speed up the process of dissipation.

This is what a morphodynamic process is. But what is morphodynamic work? Let’s return to the example I gave above of the rock being pushed down the hill to produce a rolling process. Morphodynamic work is a morphodynamic process giving rise to another morphodynamic process. Consider the behavior of a tumbleweed. As wind, caused by a homeodynamic process, pushes this tumbleweed in a certain direction, the tumbleweed itself travels, having been pushed in the direction it is going by another dissipative structure. The tumbleweed will continue to travel, perhaps pushing other objects out of the way (thus producing morphodynamic work), and coming to rest at some point in the environment, pressing (homeodynamic) energy into that local environment.

Teleodynamic Processes

Both homeodynamic and morphodynamic processes are dissipating. They possess orthograde tendencies wherein whatever order they form leads to their own structural dissipation. Having no access to their own generating process, such systems create maximally dissipative structures so that they can fizzle out in a non-chaotic, ordered manner. These things can remain ordered for a long time, but are ultimately dissipative and non self-sustaining.

Deacon argues that things like life, consciousness, and meaning are processes in their own right, just as homeodynamic and morphodynamic processes are, but that they actively resist dissipation, escaping from basic thermodynamic and equilibrating tendencies. It is not clear that such non-equilibrating biases emerge out of nothing. Instead, they must emerge out of something.

Deacon calls such processes teleodynamic processes. This arises from the Greek telos, or aim. Teleodynamic processes are aim-directed. They exist for a purpose - purpose not being something “out there” in the world, but something a system comes to anticipate, react to, and therefore come to negotiate expectations with in a reciprocal interaction.

Teleodynamic processes, in Deacon’s view, are coupled morphodynamic processes. Unlike the water in a toilet bowl, which simply dissipates into itself, a teleodynamic process is defined by mutual morphodynamic work. Given that morphodynamic work is a morphodynamic process giving rise to other morphodynamic processes, a teleodynamic process is at least two morphodynamic processes giving rise to one another. In other words, as one system dissipates, it performs work on another, which in turn, through its own dissipation of the work done on it, performs work back on the first. They are, in abstract physical terms, falling into each other to maintain the system.

But not only that: while a morphodynamic process can do work on another morphodynamic process, a teleodynamic process resists dissipation. It propagates its work back onto the system from which its own causality emerges to sustain its own self-propagation. This is why teleodynamic processes exhibit telos: in a teleodynamic process, each process exists for the sake of the other process. As Kant writes in his critique of teleology, “An organized being is then not a mere machine, for that has merely motive power, but it possesses in itself formative power of a self-propagating kind which it communicates to its materials though they have it not of themselves.” As noted by Deacon, “in Kant’s terms, each of these component processes is present for the sake of the other. Each is reciprocally both end and means. It is their correlated co-production that ensures the perpetuation of this holistic co-dependency.”

The Origins of Life

It is difficult to see teleodynamic patterns in nature: coupled systems which propagate simply “for the sake of” one another. They are also the result of morphodynamic processes which, themselves, are dissipative. This means such systems should rarely arise in nature, and should be even more difficult to maintain. Discussing the presence of these systems in the real world, Deacon turns to the origins of teleodynamic processes with regard to the origins of life to highlight what a minimally teleodynamic process may look like.

Drawing on the work of Stuart Kauffman, Deacon notes that many standard definitions of life require, minimally, a system of autocatalysis (a chemical system where sets of catalysts are used to produce further members of that set) and a structure of containment (thus creating an inside-outside distinction between the object and environment). Combining these two principles, you can consider that most organisms or living beings contain some level of self-assembly driven by autocatalysis (consider, for example, that your body and systems of cells continuously produce themselves using themselves). While such a description is ubiquitous to systems of life, it remains a mystery how non-living processes came to eventually develop a system of autocatalytic self-assembly.
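To make autocatalysis a little more tangible, here is a minimal sketch of the simplest case I can think of: a single catalyst X that converts a substrate A into more of itself (A + X -> 2X). This is my own illustration, not a model from the book or from Kauffman, whose autocatalytic sets involve many mutually catalyzing species. The function name, rate constant, and time step are all assumptions.

```python
def autocatalysis(substrate, catalyst, rate=1.0, dt=0.1, steps=200):
    """Toy Euler simulation (an assumption, not Deacon's model) of
    A + X -> 2X, where X catalyzes its own production from substrate A.

    Returns the list of (substrate, catalyst) amounts, one per time step.
    """
    history = [(substrate, catalyst)]
    for _ in range(steps):
        # Production needs both substrate and existing catalyst:
        # with no seed of X, nothing ever happens.
        produced = rate * substrate * catalyst * dt
        substrate -= produced
        catalyst += produced
        history.append((substrate, catalyst))
    return history


if __name__ == "__main__":
    run = autocatalysis(substrate=1.0, catalyst=0.01)
    for step in (0, 20, 50, 100, 200):
        a, x = run[step]
        print(f"step {step:3d}: substrate = {a:.4f}, catalyst = {x:.4f}")
```

Two things stand out even in this toy. The catalyst grows slowly, then explosively, then stalls once the substrate is used up, so on its own autocatalysis is just another process that runs itself down. And without a seed of catalyst the reaction never starts, which is one way of seeing why the step from non-living chemistry to self-assembly is the hard part.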

Deacon thus proposes the concept of the autogen as the initial step. The autogen, similar to Maturana and Varela’s concept of autopoiesis, is a self-generating system which exhibits enclosure. This system, perhaps unsurprisingly, can best be described as coupled morphodynamic processes taking place between the formation of polyhedral structural capsules which themselves combine with one another and reassemble in different ways. As a toy example, consider a morphodynamic process which can develop structures such as Lego bricks and, through the bespoke components of their structures, combine these Lego bricks in reproducible ways. Furthermore, imagine that this combination of Lego bricks falls apart or breaks in half. Again, due to the bespoke components of these structures, the Lego bricks will likely reform in similar ways, with parts of the broken structures providing substrates for the addition of similar, regular parts over time. This substrate-structure linkage, which can evolve more or less randomly due to “competition” and filtering between more regular substrates over time, can lead to pre-living, enclosed, autocatalytic structures more or less propagating through time. Most critically though, Deacon argues that the replication dynamics of the autogens make them, unlike typical homeodynamic and morphodynamic processes, functional. Their reciprocal linkage and replication therefore make them a minimal dynamical system. As noted by Deacon, “The autocatalysis, the container, and the relationship between them are generated in each replication precisely because they are of benefit to an individual autogen’s integrity and its capacity to aid the continuation of this form of autonomous individual.” This explains the minimal emergence of what we might call function (a step towards meaning) in the universe.

Deacon’s autogenic theory, which has not necessarily been proven in lab settings, is a bold how-possible explanation of the origins of certain characteristics of pre-life and function in the universe. Even more boldly, Deacon expands the theory to argue that autogenic stability offered a stable replicator in a pre-DNA world, replicating their morphology through their structural features, to which eventual energy cycling processes (e.g. ATP) and information replicating (e.g. DNA) features were added later, such that the origins of life was a process of Morphology Reproducers (MRs) giving rise to MRs + Energy Cyclers (ECs) giving rise to Morphology Reproducers + Energy Cyclers + Information Replicators. Considering that autogens are morphology reproducers absent of energy cycling, this means that while the universe may not be teeming with life, it may be teeming with autogenic structures in a relatively cold, at-equilibrium universe, only undergoing periods of replication whenever perturbed by the external world. Life, then, can be viewed partially as a morphogenic process with energy cycling further pushing this replicating teleodynamic process by keeping it far from equilibrium, refining its ability to cycle and reduce free energy, and allowing these functional processes to continuously add more while keeping it in a far-from-equilibrium state.

A Theory of Information and Work

Deacon’s theory of function therefore does not rely on things like function simply being an observer-dependent phenomenon, but relies on a thing doing something to something else to elicit a cause. In the case of the autogen, as a minimal system, we can see how coupled processes can create processes which propagate for the sake of themselves. But most of what we come to understand as eliciting function relies on an understanding of meaning, which is observer-dependent. But the point of this book is that such things are, in a sense, real. We want to understand how mind, as a mental process, emerges from matter. To do this, we need theories of work and information which generalize for both physical and non-physical processes (people in my book club got stuck on this notion, but remember, this book is about processes, if mind is a process as much so as other physical phenomena exhibit processes, then process-level theories are sufficient for unifying physical and mental processes).

When it comes to work, homeodynamic work is orthograde action which yields contragrade results (something dissipates, but creates more ordered arrangements - as in how wind energy works); morphodynamic work is dissipation imposing change to create other morphodynamic processes in an orthograde-orthograde direction, often creating coupled morphograde systems, as in teleodynamic systems (e.g. in the case of the autogens, where constraints on each sub-component of the autogen are working with the constraints on the other sub-components to propagate one another; this is often the case also in some vortexes, which nevertheless dissipate); and teleodynamic work is work which leads to the restructuring of a system’s constituent processes to generate contragrade teleodynamics. This last part is hard to grok, but consider this: right now, I am producing writing, which is producing candidate solutions to potential meanings in your head, thereby constraining your thought processes in a specific direction, all while your brain is doing morphodynamic and thermodynamic work to produce these thinking processes. This system is teleodynamic, as the passive text, which is generating constraints, and your thinking, which is conducting the work, are currently coupled. The text is generating a great deal of work on your mental processes.

I discuss above the different forms of homeodynamic and morphodynamic work, but what makes these concepts relevant to mental processes is the role that information plays in generating work on them. Canonically speaking, the concept of information has long been couched in the terms first outlined by Claude Shannon in his paper, A Mathematical Theory of Communication (1948). In his paper, Shannon outlines a number of metrics for understanding information in terms of the transmission of bits, the most important of which is our modern concept of entropy. At its core, Shannon information deals with the probability of an event occurring in some distribution. In this way, it is often described as a measure of “surprise” upon a certain bit of information being transmitted: the less probable the event, the more surprising it is. Shannon entropy is then the average surprise across the whole probability distribution, that is, the information you expect to gain, weighted over all possible states of the variable.
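To make “surprise” concrete, here is a minimal sketch in Python of the standard textbook definitions. It is not anything taken from Deacon’s book; the function names `surprisal` and `shannon_entropy` and the two example coins are my own, chosen purely for illustration.

```python
import math

def surprisal(p):
    """Information, in bits, gained on observing an event of probability p."""
    return -math.log2(p)

def shannon_entropy(probs):
    """Average surprisal over a discrete distribution, in bits.

    Zero-probability states are skipped, since they contribute nothing.
    """
    return sum(p * surprisal(p) for p in probs if p > 0)

# Two illustrative (made-up) distributions over heads and tails.
fair_coin = [0.5, 0.5]
biased_coin = [0.9, 0.1]

print(shannon_entropy(fair_coin))    # 1.0 bit: maximally uncertain
print(shannon_entropy(biased_coin))  # roughly 0.47 bits: mostly predictable
```

The fair coin carries a full bit of uncertainty per flip, while the biased coin carries less than half a bit, because most of the time it does what you expect.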

Shannon’s measure is related to, although not the same as, Boltzmann entropy. Boltzmann entropy is a similar measurement which tells us about the number of possible states that a system can take - high entropy entails a large number of possible states, implying a high degree of disorder, and low entropy entails a lower number of possible states, implying a high degree of order.
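For readers who want the formulas side by side, the standard textbook forms are below. The symbols are the conventional ones rather than Deacon’s notation: p_i is the probability of state i, W is the number of microstates available to the system, and k_B is Boltzmann’s constant.

```latex
% Shannon entropy of a discrete variable X, in bits
H(X) = -\sum_{i} p_i \log_2 p_i

% Boltzmann entropy of a system with W equally likely microstates
S = k_B \ln W

% Special case: if all W states are equally likely, p_i = 1/W, so
H = \log_2 W
\quad\Longrightarrow\quad
S = (k_B \ln 2)\, H
```

In that equal-probability case the two quantities differ only by a constant factor, which is part of why they are so often run together. Outside of it, the overlap is less tidy.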

I don’t have the time to go into the amount of overlap and non-overlap between these two forms of entropy (and thereby piss people off), but Deacon ties these systems together to try to better understand work. Shannon entropy, which relays to us a level of uncertainty about a system based on which states are present/absent, can be chained to Boltzmann entropy, which tells us about the work done on a system based on its order or disorder, to produce referential information. Referential information is the “aboutness” of a system: it is an inference of how information is produced based on the work done to it. If you are viewing a tree outside your home swaying in the wind, you understand how the tree in the wind will sway based on the fact that it is a tree. The argument is you can infer causality based on Shannon + Boltzmann, although it doesn’t stop here.

You can produce causal information based on Shannon + Boltzmann coupled systems, but this information says nothing about what information is useful when you generate candidate solutions. Let’s say you come home tomorrow and the tree swaying in the wind is now broken in half. What happened? You now enter a process of reasoning. This is where teleodynamics, and the process of thought, enters. Teleodynamic work takes potential Shannon + Boltzmann solutions and chains them to prior observations about reality. Processually speaking, what you would like to know is which answer, out of many possibilities, is right. This requires a linkage between the information-generating process and the referent. This itself is a teleodynamic system, wherein one’s expectations about the world produce candidate explanations, while the state of the world filters good ideas from bad. Deacon calls this system of information, where candidate solutions are constrained by their concordance with referents, Darwinian information. Put more simply, this is more or less like a system of Bayesian updating where you could imagine your expectation of the world produces an expectation, this expectation produces work, generating hypotheses; you encounter the expectation of this expectation, more work is produced, and the system generates constraints such that the referents you come to expect are producing constraints on what you think the referential processes are.
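The Bayesian analogy can be sketched in a few lines of Python using the broken tree. To be clear, this is my own toy illustration of the analogy, not Deacon’s formalism: the three hypotheses, the function name `bayes_update`, and every number below are invented for the example.

```python
def bayes_update(prior, likelihood):
    """Return the posterior over hypotheses after one observation.

    prior:      dict mapping hypothesis -> P(hypothesis)
    likelihood: dict mapping hypothesis -> P(observation | hypothesis)
    """
    unnormalized = {h: prior[h] * likelihood[h] for h in prior}
    total = sum(unnormalized.values())
    return {h: value / total for h, value in unnormalized.items()}

# Candidate explanations for the broken tree, with made-up prior beliefs.
prior = {"windstorm": 0.5, "lightning": 0.2, "chainsaw": 0.3}

# The world pushes back: you find scorch marks on the trunk.
# Made-up probabilities of seeing scorch marks under each explanation.
scorch_marks = {"windstorm": 0.05, "lightning": 0.90, "chainsaw": 0.05}

posterior = bayes_update(prior, scorch_marks)
best = max(posterior, key=posterior.get)
print(best, round(posterior[best], 2))
```

Your expectations generate the candidate explanations, and the state of the world (the scorch marks) filters them: lightning started as the least favored explanation and ends up the most probable one.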

There are two values to these sections, I think. The first is grounding information in fundamental, material processes, while also isolating it from some of the “universal theories” of information that are more popular now than ever. Many of these perspectives claim “information is fundamental” in the same causal sense that electrons zipping about in the world are fundamentally causal for producing universal, physical patterns. I am not an opponent of this sort of view, but I do find its nature somewhat annoying given what information is, which is a measurement. On the other hand, Shannon + Boltzmann chaining ensures that the nature of information is primarily physical and non-subjective, but that the process which produces this information is ultimately local. This is important for some people, but I won’t elaborate here.

The second is Deacon’s view of information as producing teleodynamic work, wherein teleodynamic expectations create further constraints, and where teleodynamic work produces and propagates further morphodynamic work “downwards” through the system. Because teleodynamic work requires morphodynamic and homeodynamic work to produce it, teleodynamic work done on the level of teleodynamic minds resonates downwards through the morphodynamic and homeodynamic processes which produce them. In other words, the processes by which thought comes to be influenced by things as simple as text on paper have some level of downward causation on the energetic processes present in the brain which produced that mind. Simple as.

Sentience, Consciousness, Machines

Sentience is not just a product of biological evolution, but in many respects a micro-evolutionary process in action. To put this hypothesis in very simple and unambiguous terms: the experience of being sentient is what it feels like to be evolution. - Deacon

This last bit of insight is critical for the real target of the book: you. What does it mean to be a person? Is it the same as being a body? If so, does being a body entail being the same thing as a bag of cells? No, because you are not a body. The nature of conscious experience is process-based. The things we feel, the qualia of being, are fundamentally a process, not a thing. In the same sense that a ball striking a ball on a billiard table is a process and not the things themselves, or that Darwinian evolution is a process of continuous filtering of objects rather than the objects themselves, you, yourself, are a process.

The book takes a great deal of time to come around to this point. If you make it through the first 14 chapters, the last three are nothing but meaning-evoking. If you skip the first 14 chapters to read the last three, they are meaningless. The concepts presented thus far in this blogpost do the book little justice and probably do not help boil down the concepts any better than the tome itself. These concepts require deep elaboration. It’s impossible to understand them otherwise. But I will try and summarize the most elegant parts, the last bit of the book, as best as I can.

We now understand that teleodynamic processes emerge from morphodynamic processes which emerge from homeodynamic processes. These processes are interlinked to one another, but the causal processes of a teleodynamic process are not particulates of teleodynamic processes and, similarly, the causal processes of morphodynamic processes are not particulates of homeodynamic processes. All there is in these systems is cause and effect, wherein one cause institutes an effect, which can end there or institute another cause. Unlike panpsychism, which claims that there are bits of consciousness (a process) somehow inherent and hanging out in all things, Deacon’s rather simple claim is that if we view things as causally linked but otherwise object-level separable, it implies then that something like mind can sit on top of other processes, effect downward causation on those processes, and yet remain an independent construct, for the same reason that an individual with brain damage can exhibit serious alterations to certain parts of themselves but nevertheless maintain a level of coherent individuality.

So what is consciousness? Unsurprisingly, it is a teleodynamic process. But similarly much of the body is a teleodynamic process, with the vascular system altering its regulation based on feedback from the rest of the body and so on and so forth. So how is sentience different from the cybernetic regulation of the rest of the body? The answer is that our consciousness is a nested teleodynamic system. It is prediction of prediction. Unlike the vascular system, which responds in a clear input-output manner to what the rest of the system is doing, sentience adapts to external constraints imposed while generating internal constraints to facilitate that adaptation. To invoke Fristonian terms, mind is the locus of agency at a bi-directional Markov blanket, monitoring both internal and external environment. Deacon argues this is the separating barrier between something like computation and mind. While artificial intelligence fulfills the first part, of adapting to external constraints imposed by the external world, it does not generate further internal, path-dependent constraints on itself: the computations done on a machine, while they can be adaptive, in terms of altering their systems of instructions, do not fundamentally alter the meaning-making mechanisms of the process itself (such that adaptive learning may alter code but does not alter the substance of the learner itself - its system is teleological, but not processually teleodynamic).

What’s radical about Deacon’s interpretation of mind is that it has committed philosophy of mind’s ultimate sin. It has reinvoked the homunculus, wherein the homunculus is a thinking, feeling, modeling, independent self, an observer of what is happening both within it and outside it, an observer of the process of thought. But unlike other invocations of the homunculus, Deacon’s homunculus has been constructed, rather than assumed. It is a dynamical, adaptive process which adapts both to external conditions, and in doing so, attempts to understand internal conditions - it knows, if it has a stomach ache, it should not do jumping jacks; it knows, if it only has $100 in its bank account, that it should not go on vacation with its friends. And while many of these processes, learning processes, rules, could be instituted without a mind, why is it, and how?

Which leads Deacon to the last chapter, attempting to come to an explanation as to how you are you. Core to understanding this is that while the mind is a process, it is a process instantiated on neurons, neurons which fire action potentials at one another, and attempt to reduce uncertainty in one’s environment. Sure, they’re probably using activation functions and accounting for non-linear logic, but the overall point is that something like consciousness is not simply the aggregate patterns of these neurons, but something overlaying them. Instead, consider consciousness as the process part and parcel of the math which neurons use to generate their computations. Mental processes are not simply the processes of “so-called neural networks” (as Deacon says), they are the entirety of the thermodynamic processes fulfilling the energy and metabolism of the 100 billion neurons of the human brain. To return to Edelman, they’re the aggregate patterns of fields of entire groups of neurons attempting to stay alive. The neurons in your brain have one primary function, to stay alive, and a secondary consequence, which is computation. This means that the processes underlying mind, are not simply computational, but fundamentally homeo- and morphodynamic. Mind sits atop both metabolism and computation.

We should then understand the emergent behavior of neurons, not as optimal neural networks, as we’ve come to attempt to instantiate computational processes in artificial neural nets (ANNs), but as selfish agents who metabolize energy and propagate signals when energy reaches sufficient thresholds. Similarly, because neural networks are highly re-entrant (to invoke Edelman - meaning their computations are recursively interlinked), the potential for noise to propagate through the system, producing dynamical chaos, is extraordinarily high - meaning straightforward computation on the neural level, as we view it happening through neural networks, is extremely unlikely. Instead, Deacon argues, the brain is designed to propagate noise, different from the sort of computational processes we expect in our ANNs. This noise, which propagates through the system, engages with higher-level constraints and regularities as a consequence of lower-level processes, and is therefore shaped, dynamically, through the reordering of activation patterns and metabolism in the brain. You might then consider this noise, the constraints imposed on it, and its propagation, the locus of consciousness, rather than the activations of the neurons themselves. Deacon therefore argues that the brain should be viewed, not like a giant ANN, but instead as something of a giant population-level resonance chamber, wherein influence conducted on one part of the network spreads energy and activation to other parts.

This is what consciousness is. The brain, comprised of selfish neurons, propagates noise in its activation patterns; noise which, if it were present in a computational process, would lead to its eventual breakdown, is instead the primary driver of neuronal activity at all scales. The noise of this system responds to the internal environment, as a consequence of what is firing when. But this noise also responds to external conditions, as it is continuously receiving information from the outside world and dynamically re-adapting therein. Consider what your brain is doing while reading this blogpost - it is rearranging its fundamentally thermodynamic, metabolic processes to respond to passive patterns on a screen - it is being adjusted to the text to develop a system of understanding it while adjusting its internal system to comprehend said text - the metabolism of the neurons on its own does not cause this, but the emergent properties of the process of reading do. This is entirely different from the sort of things that single-celled organisms do and from what our intestine does for the rest of the body. Deacon argues this dynamical process is why our emotions tend to get the better of us and, at times, maintain a hold of us despite us knowing otherwise - they are attractor basins in a nearly-fluid dynamical process we might come to call consciousness. In this sense, consciousness, then, should be viewed as a fluid dynamical process rather than as a set of logical, computational procedures. And indeed, Deacon returns to pre-computational visions of human psychology and qualia to discuss how, before the advent of computation, the primary analogies used to describe human experience tended to be fluid inspired.

Viewing the mind as a fluid, dynamical system therefore follows, and it is in the last moments of this book that we are reminded that Deacon is a neuroscientist. In these sections, he outlines how lower-level homeodynamic and morphodynamic processes should influence higher-level thought, with suppositions on the metabolic origins of daydreaming, the emergence of differentiated mental representations as the brain engages in a system of metabolic adjustment, and how external factors engage the brain’s ability to propagate noise to direct systems of focus. Deacon argues then that much of our focus on the empirical study of consciousness and experience, via the privileging of neurons, may be misguided (recentering the importance of the glia, which constitute 50% of the adult human brain, as playing a critical role in conscious experience). His critique of fMRI and EEG studies holds that while many have attempted to identify conscious processing utilizing these various neuroimaging techniques, they are misguided, as this search identifies not the generating mechanism behind conscious thought, but instead its consequences, in the same way that viewing footprints in the sand on a beach is an indication of behavior, but not the behavior itself.

The epilogue of Deacon’s book is interesting. He’s testing the waters on something which is not fully fleshed out, but is provocative if his case can be proved. If it is the case that mind can arise from matter, that we can assess the impact of the non-material on the material, and that we can understand the ways in which things as remote as ideas conduct work on the material things we call human beings, it may have deep philosophical implications. It may imply, in some way, that philosophy is real. Sure, we can compute as many logical sequences as we want, but in a very fundamental way for human beings, these logical sequences are read, understood, and ultimately enacted on a teleodynamic system itself enacting change on simple homeodynamic and morphodynamic processes. The mind, conducted through material systems, itself has feedback onto the material systems in a non-trivial way.

Ultimately, though, let’s disregard the issue of bodies. Let’s think about mind as process. Consider, next time you look a person in the eyes, that what you’re viewing is not the person in their body, but the gateway to the inference-generating and responding mechanism that sits inside it. In this sense, a person is not a person, but is a thing sitting inside a human being. You, yourself, would then be a dynamical system overlaid on the brain and body, adjusting to it and adjusting it. Like a system of waves or electrical systems, your experience is not the body you’re in or the neurons generating activation patterns, but the process whereby information from the outside presses against constraints on the inside and the two adjust to one another. In the words of Deacon, “the experience of being sentient is what it feels like to be evolution.”

You are a process.

Conclusion & After Deacon

This provocative book, through its identification of mechanisms, provides a great deal of food for thought. The person I was before reading it was very different than the person after. While many will gripe with Deacon’s account on the basis that he is positing a variety of mechanisms for generating consciousness without sitting down and identifying them, this is a problem present in virtually all accounts of consciousness (save, maybe, IIT, which will need much more work in the future in order to make its case). But what is so convincing about Deacon’s work is that he walks us from the real, physical to the alleged immaterial without invoking new systems of logic, without denying the existence of something that is very real (thought) as the illusionists have done, without alleging that consciousness or information are somehow magically fundamental to the universe, and without ever appealing to unknown phenomena we have to go out there to look for.

As noted by Deacon in the book, “The laws of physics remain unchallenged… [the theory] didn’t undermine any known physical principles, nor did it introduce novel, unprecedented physical principles… It didn’t even require us to invoke any superficially strange and poorly understood quantum effects in order to account for what prior physical intuition seemed unable to explain about meaning, purpose, or consciousness.” Written in 2011, the book seems to be a predecessor to a great deal of work done in recent years on neuroimaging, such as the phenomenon of representational drift before it was fully described; on the use of greedy-agent reservoir networks for understanding how metabolic processing can lead to higher-level computational outcomes, as in the work of my colleague Ben Falandays; and it seems to anticipate much of the work conducted on the so-called Free Energy Principle, on which many readers will and still should feel divided.

I had several big takeaways from the book which have developed immense food for thought for me over the last few months. In particular, I have thought a great deal about the two themes of consciousness and experience as intrinsic to life, and meaning as real.

Deacon’s framework outlines what it is that makes a human and a computer different and why not computation, but implementation, is what consciousness is. Without invoking magical carbon-stuffs, he makes a convincing case as to what things like sentience, feeling, and thinking are, and outlines that to be conscious means to have an active awareness of and influence on your underlying process, knowing your constituent parts and their health, and being able to enact change on them as much as they do so on you. His process-based account of self and sentience implies that a human is not something that can be uploaded to a computer, inasmuch as a hurricane, while it can be described via a system of dynamical equations, isn’t something that can be actively uploaded and downloaded so easily. Instead, conscious experience is a temporally-bounded dynamical adaptive process, part and parcel of the forces influencing us externally here and now, rather than a static point in statespace or a series of algorithms implementing a “human procedure”. It implies that what we are is not just a perceiving being of two things we call “here” and “now”, but in a very real way, humans are that sense of here and now.

Second is Deacon’s explanation of meaning, and how, as long as it is perceived by a human, it is just as real as any other real force out there. In his account, ideas are things which take place inside and between people, not just solely in an outer world. In a teleodynamic process, we are not simply information-digesting machines which take in and spit out bits and pieces of the world, but instead are coupled to our philosophies and systems of meaning in ways that make ideologies, religions, and deities exhibit very real control on us, not only by altering our logical suppositions about the world, but by producing active change within the human system. In this way, Deacon’s framework gets as close to a theory of transcendence and feelings of “something else” out there as I can imagine, explaining our ability to be shaped by ideas such as meaning, beauty, and evil as a real process, rather than as the subjective, conceptual word games that the nihilistic and immaterial philosophies of the current age force us to presuppose.

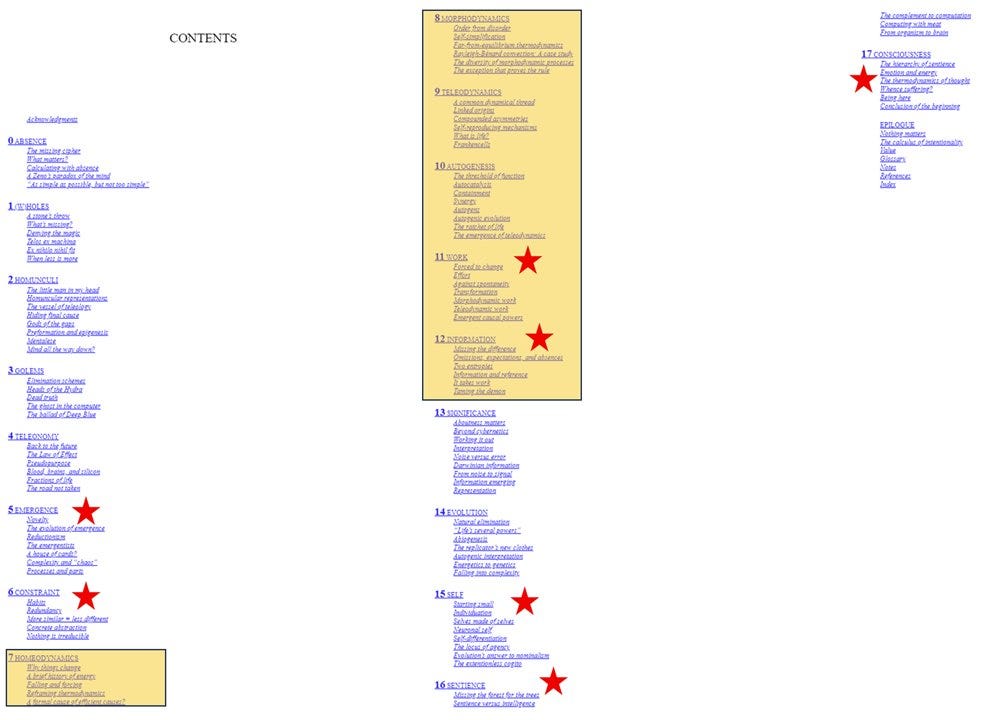

In any event, none of these points or subsections of this blogpost will have done the book full justice. Given the difficulty I, and others in my bookclub, experienced in reading it, I have likely gotten a number of things wrong. If any of these topics have interested you, I encourage you to read it for yourself. Below is something of a guide to the book. Red stars are, to me, the most critical chapters. What is highlighted in yellow is a difficult trek, but the most critical section of the book. That said, you should read the whole thing. Everything leading up to Chapters 11 and 12 is developing the argument you will read in those two chapters, which are necessary for understanding the rest of the book. Best of luck and happy reading.